Table of contents

Week 2: Understanding sound and setting the scene for the final assessment

Week 3: Reflecting on the impact of technology in shaping the new era of music

Week 4: Testing and analysing different recording approaches

Week 5: Drums and their contribution to the birth of music genres

Week 6: What is the essence of a bassline?

Week 7: Building a hook with leads and vocals

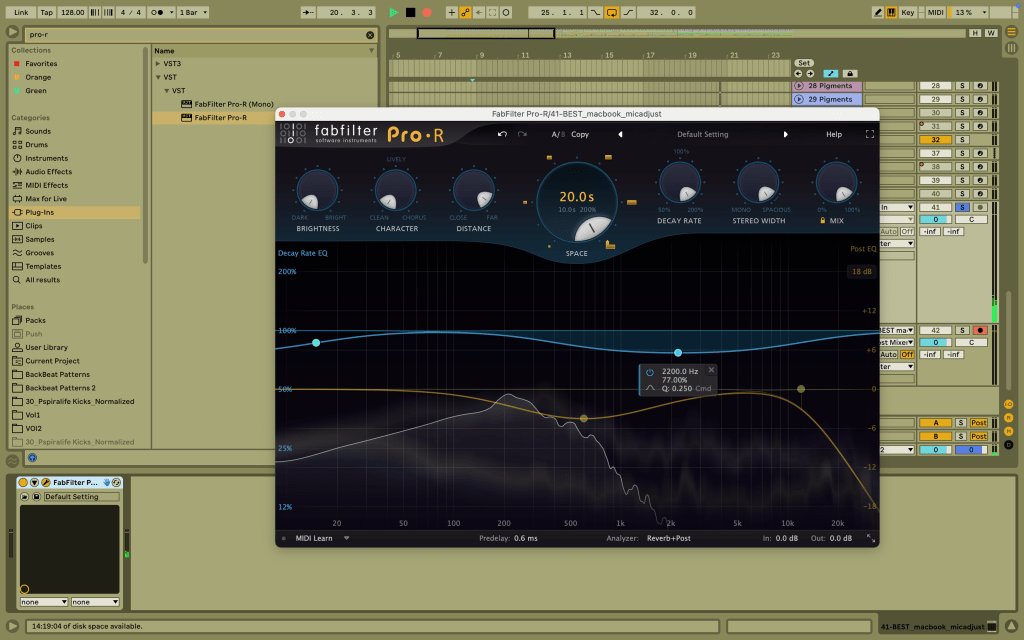

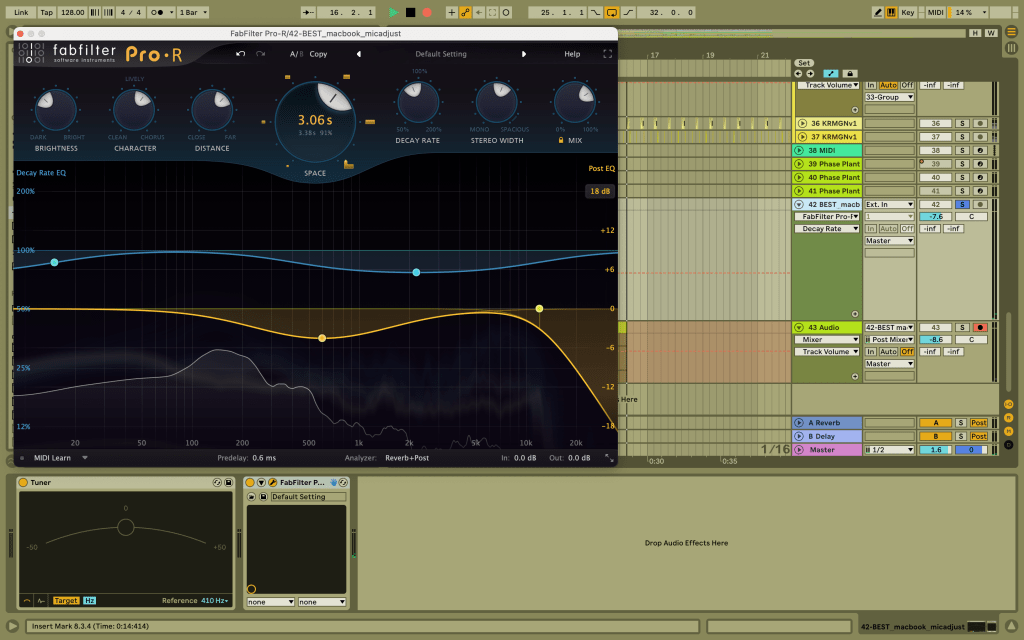

Week 8: Modulating depth and space with reverb

Week 2: Understanding sound and setting the scene for the final assessment

“Sound is a form of energy that propagates through a host medium in the form of longitudinal waves.”

Aim of the 1st session: To construct a simple ‘sound cannon’ and use the echo time to estimate the speed of sound.

Objectives:

- Assemble a simple sound cannon using a plastic cup and a balloon.

- Record a slow-motion video on my phone, placed at a known distance from a wall, as the sound is generated.

- Estimate the duration of the echo time.

- Calculate the speed of the sound.

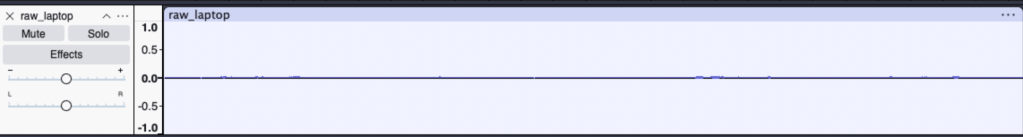

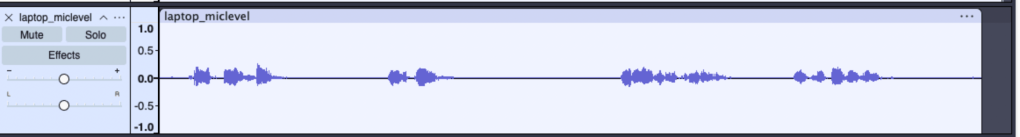

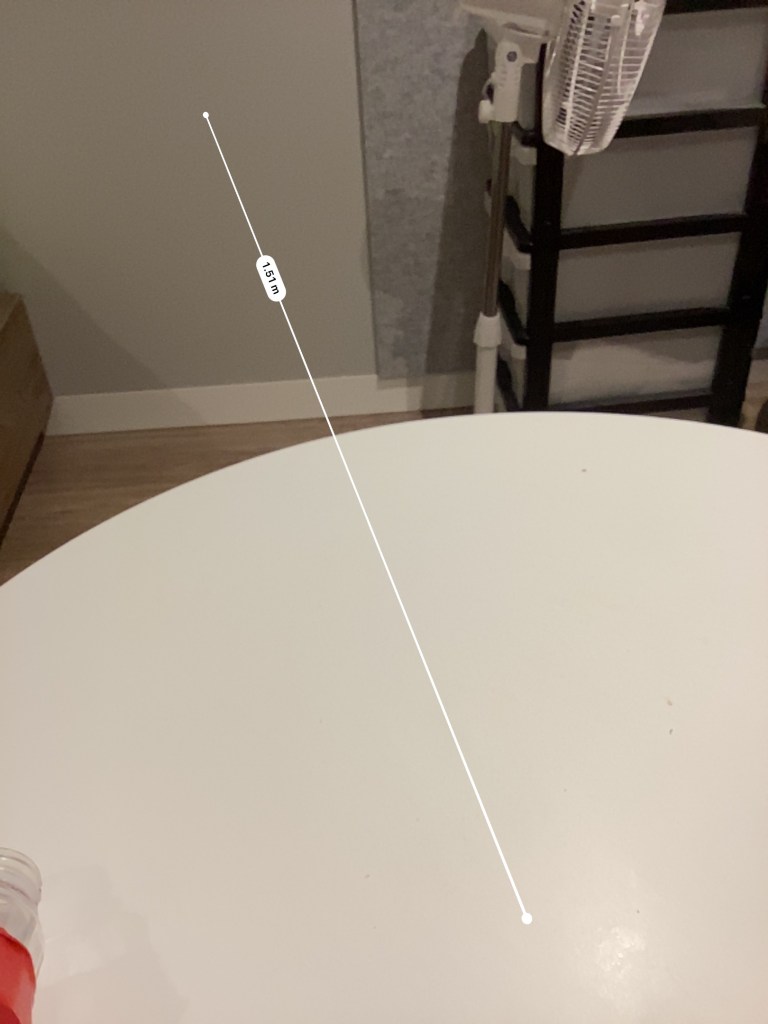

I tried to measure the time the pop takes to reach the wall (1.51 m from my microphone) and bounce back to the microphone, i.e. the echo time. My best estimate was 0.73 s. Using the speed equation v = d/t with the round-trip distance, v = 2(1.51 m)/0.73 s ≈ 4.14 m/s, which is clearly nowhere near the actual value (cf. ~343 m/s in air).
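To make the arithmetic easy to re-check, here is a minimal Python sketch of the same calculation. The function name `speed_from_echo` is my own, and the 343 m/s reference assumes air at roughly room temperature.

```python
def speed_from_echo(wall_distance_m, echo_time_s):
    """Estimate the speed of sound from an echo.

    The sound travels to the wall and back, so the path is 2 * distance.
    """
    return 2 * wall_distance_m / echo_time_s


# Values from my experiment
wall_distance_m = 1.51
echo_time_s = 0.73

measured = speed_from_echo(wall_distance_m, echo_time_s)

# Assumed reference value for air at about room temperature
reference = 343.0
ratio = reference / measured

print(f"Measured speed: {measured:.2f} m/s")
print(f"Reference is about {ratio:.0f} times larger")
```

The result is off by nearly two orders of magnitude, which suggests the 0.73 s I measured was not the echo time at all.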

Limitations in this experiment:

- The wall was too close, and the sound too low in frequency, for an echo to be audible.

- There were some obstacles along the direction of sound propagation that caused deflections.

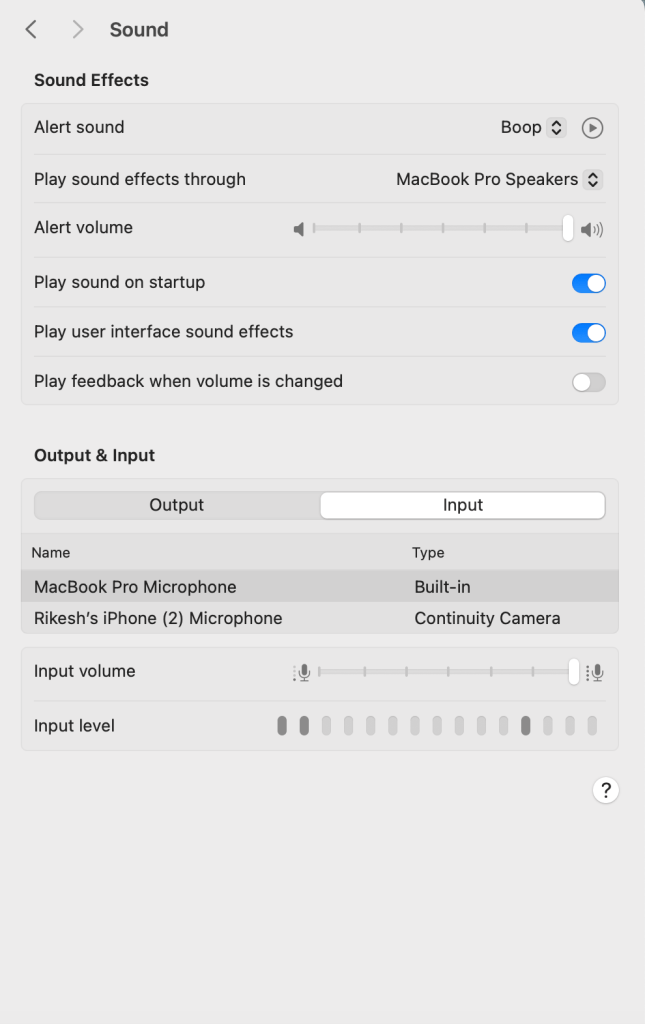

- I also could not measure the time properly: my phone's video editor only resolves time to 0.01 s, and the echo time over such a short distance was too small to measure accurately.
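The last limitation can be checked with a rough calculation. The sketch below assumes the reference speed of ~343 m/s and works out the echo time I should have expected at 1.51 m, then the wall distance needed for the echo to span a chosen number of 0.01 s timing steps. The choice of 10 steps is an arbitrary assumption of mine, not a rule.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed reference value for air
TIMER_RESOLUTION = 0.01  # s, smallest step in my phone's video editor


def expected_echo_time(wall_distance_m, speed=SPEED_OF_SOUND):
    """Round-trip travel time for a wall at the given distance."""
    return 2 * wall_distance_m / speed


def distance_for_echo_time(echo_time_s, speed=SPEED_OF_SOUND):
    """Wall distance that gives the requested round-trip time."""
    return speed * echo_time_s / 2


expected = expected_echo_time(1.51)
print(f"Expected echo time at 1.51 m: {expected * 1000:.1f} ms")
print(f"Shorter than one timing step: {expected < TIMER_RESOLUTION}")

# Assumption: aim for an echo that lasts at least 10 timing steps
steps_wanted = 10
min_distance = distance_for_echo_time(steps_wanted * TIMER_RESOLUTION)
print(f"Wall distance for {steps_wanted} steps: {min_distance:.2f} m")
```

At 1.51 m the expected echo time is under 9 ms, so it falls below a single 0.01 s step. Under these assumptions, the wall would need to be roughly 17 m away for the echo to cover ten steps.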

Update: My subject tutors pointed out that the frame rate of the video recording and human error in the calculation both affect the results. A better approach would be to do this with a partner on the receiving end to detect the sound.

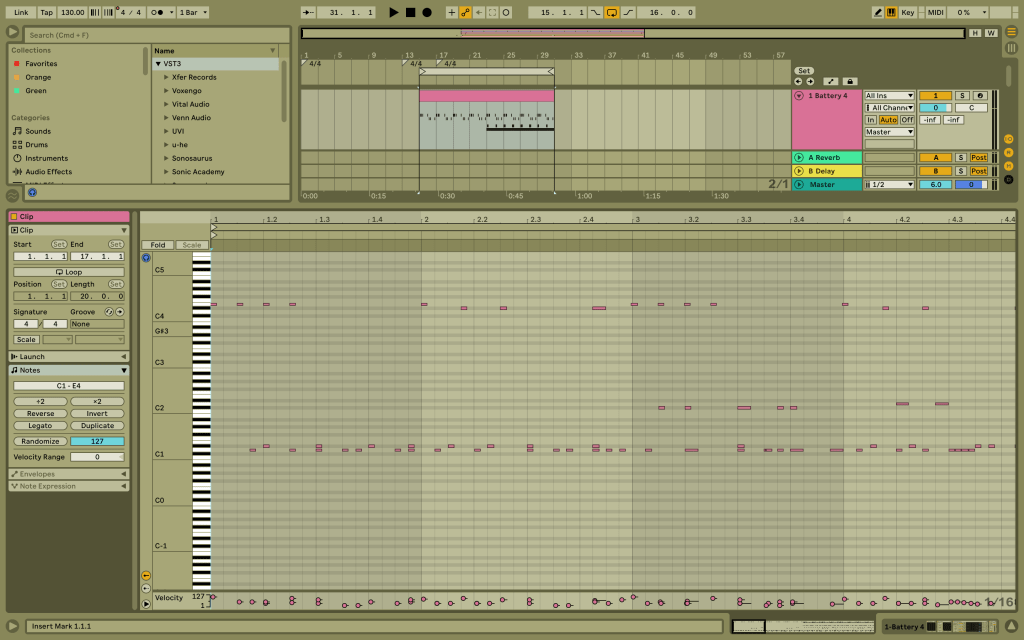

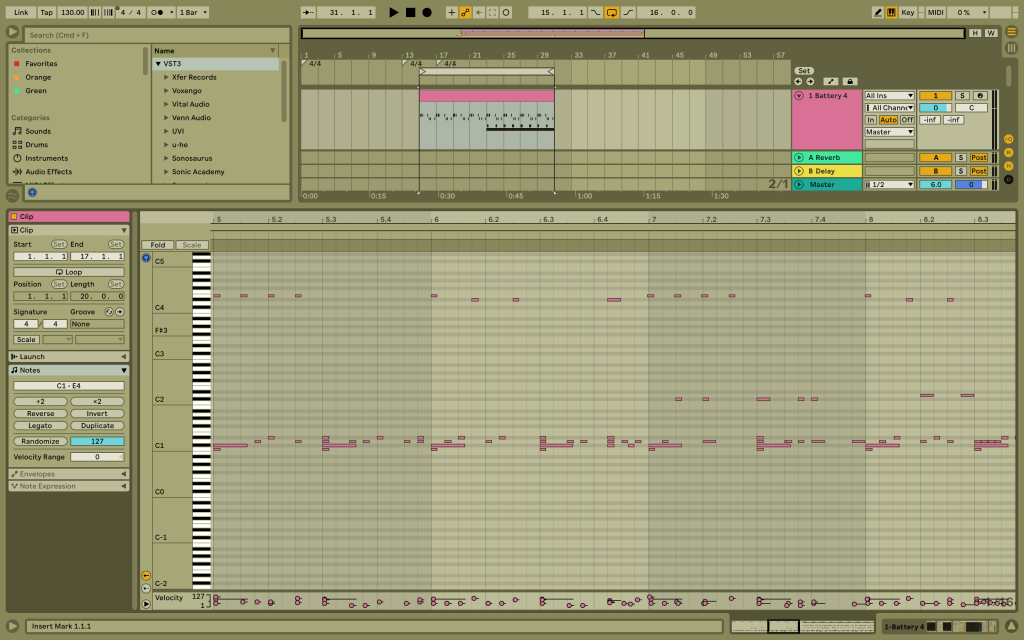

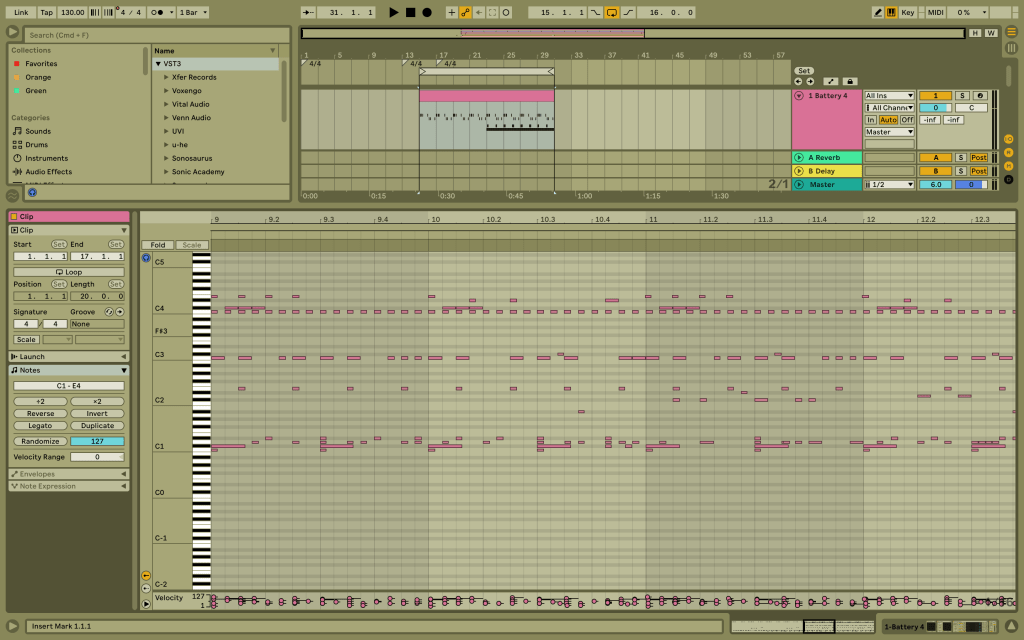

Aim of the 2nd session: To plan my track structure for the final assessment

I intend to produce a bouncy, groovy and “techy” track with some vocals that add tension and direction to the flow.

I will be using the lyrics from the following track, but recorded in my voice. I will then produce a track using these recorded vocals and Ableton as my DAW.

The lyrics:

Deep in the faith…

In the groove…

Sway side to side, let your body move

I will share updates on my recording and sound design techniques in the upcoming blog posts. Stay tuned!